mirror of

https://gitflic.ru/project/photopea-v2/photopea-v-2.git

synced 2026-04-12 19:06:05 +00:00

Mirror "Templates" and "Plugins" community pages

33

Updater.py

33

Updater.py

@@ -1,9 +1,12 @@

|

||||

#!/bin/python3

|

||||

|

||||

import sys

|

||||

sys.path.insert(0,"_vendor")

|

||||

|

||||

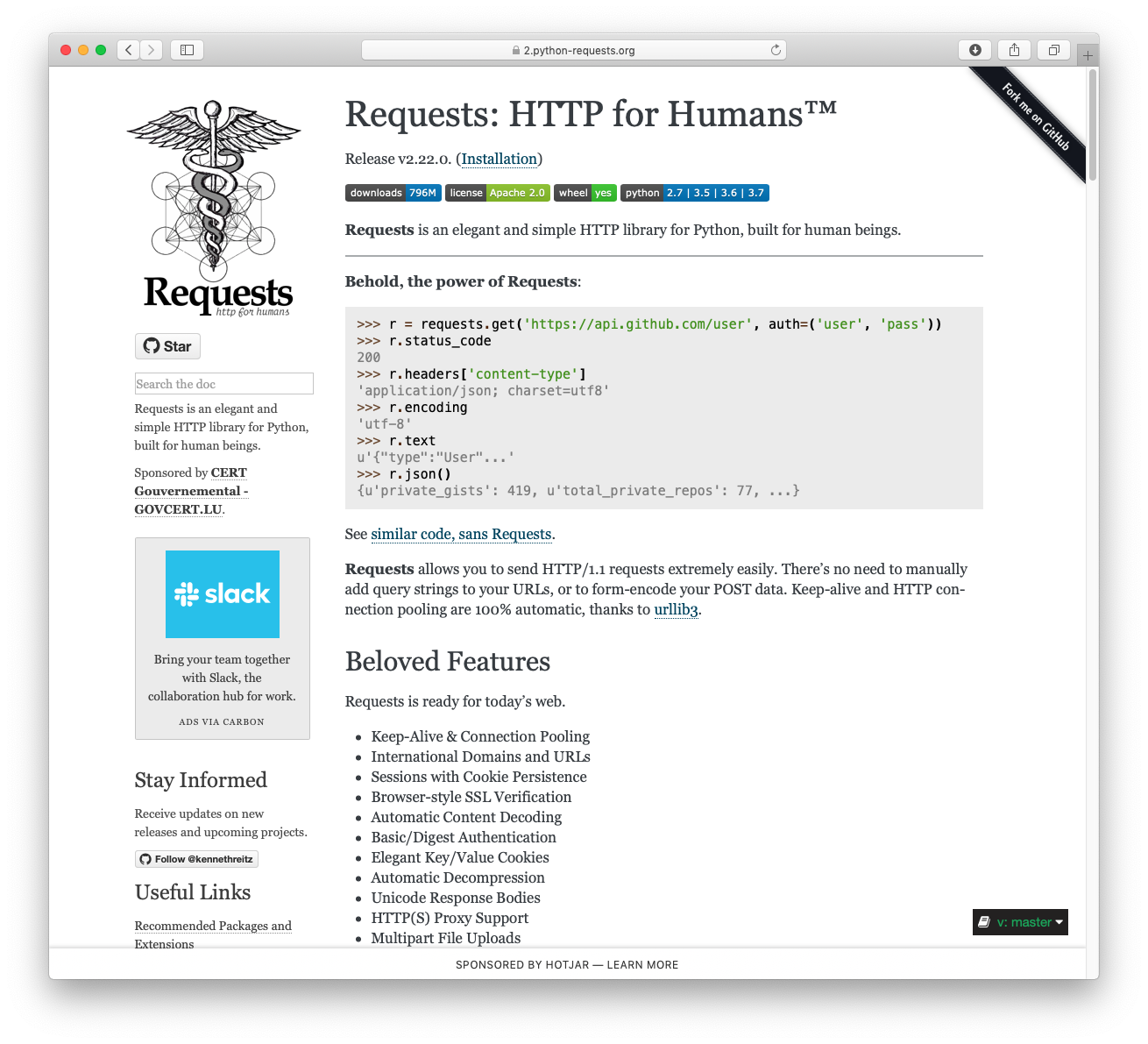

import requests

|

||||

import os, sys

|

||||

import os

|

||||

import re

|

||||

import json

|

||||

sys.path.insert(0,"_vendor")

|

||||

from tqdm import tqdm

|

||||

from dataclasses import dataclass

|

||||

import glob

|

||||

@@ -30,13 +33,29 @@ urls = [
     "code/storages/deviceStorage.html",
     "code/storages/googledriveStorage.html",
     "code/storages/dropboxStorage.html",
-    "rsrc/basic/fa_basic.csh"
+    "rsrc/basic/fa_basic.csh",
+    "img/nft.png",
+    ["templates/?type=0&rsrc=","templates/?type=0.html"],
+    ["templates/?type=1&rsrc=","templates/?type=1.html"],
+    ["templates/?type=2&rsrc=","templates/?type=2.html"],
+    ["templates/?type=3&rsrc=","templates/?type=3.html"],
+    "templates/templates.js",
+    "templates/templates.css"
 ]



 #Update files
 def dl_file(path):
+    if isinstance(path,list):
+        output=path[1]
+        path=path[0]
+    else:
+        output=path
+        path=path
+    outfn = root + output
+    if os.path.exists(outfn):
+        return
     with tqdm(desc=path, unit="B", unit_scale=True) as progress_bar:
         r = requests.get(website + path, stream=True)
         progress_bar.total = int(r.headers.get("Content-Length", 0))

@@ -44,8 +63,8 @@ def dl_file(path):
         if r.status_code != 200:
             progress_bar.desc += "ERROR: HTTP Status %d" % r.status_code
             return
-
-        outfn = root + path
+
+
         os.makedirs(os.path.dirname(outfn), exist_ok=True)
         with open(outfn, "wb") as outf:
             for chunk in r.iter_content(chunk_size=1024):

@@ -145,3 +164,7 @@ find_and_replace('code/storages/dropboxStorage.html', 'var redirectUri = window.
 find_and_replace('index.html','https://connect.facebook.net','')

 find_and_replace('index.html','https://www.facebook.com','')
+
+#Redirect dynamic pages to static equivalent
+find_and_replace('code/pp/pp.js','"&rsrc="','".html"')
+find_and_replace('code/pp/pp.js','"templates/?type="','"templates/%3Ftype="')
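For illustration only (this is not part of the commit): the `[remote, local]` pairs added to `urls` let a dynamic URL such as `templates/?type=0&rsrc=` be saved under a static file name, and the `pp.js` rewrites then point the client at that file. Because the local name contains a literal `?`, a URL that refers to it presumably has to percent-encode that character as `%3F`, otherwise a static file server would read it as the start of a query string. A minimal Python sketch of that mapping follows; `split_entry` and `static_url` are hypothetical helper names that do not exist in `Updater.py`.

```python
from urllib.parse import quote


def split_entry(entry):
    """Return (remote_path, local_path) for one item of the urls list.

    A plain string is fetched and stored under the same path; a two-item
    list is fetched from its first element and stored under its second.
    """
    if isinstance(entry, list):
        remote, local = entry
    else:
        remote = local = entry
    return remote, local


def static_url(local_path):
    """Percent-encode a stored file name so it can be requested as a URL.

    "/" and "=" are kept as-is; "?" becomes "%3F" so that it is treated as
    part of the file name rather than as a query-string separator.
    """
    return quote(local_path, safe="/=")


remote, local = split_entry(["templates/?type=0&rsrc=", "templates/?type=0.html"])
print(remote)             # path requested from the upstream site
print(static_url(local))  # path the mirrored client would request
```

The encoded result has the same shape as the string produced by the two `pp.js` replacements: the `"templates/%3Ftype="` prefix, the type number, then `".html"`.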

1

_vendor/certifi-2022.9.24.dist-info/INSTALLER

Normal file

@@ -0,0 +1 @@
pip

21

_vendor/certifi-2022.9.24.dist-info/LICENSE

Normal file

@@ -0,0 +1,21 @@
This package contains a modified version of ca-bundle.crt:

ca-bundle.crt -- Bundle of CA Root Certificates

Certificate data from Mozilla as of: Thu Nov 3 19:04:19 2011#
This is a bundle of X.509 certificates of public Certificate Authorities
(CA). These were automatically extracted from Mozilla's root certificates
file (certdata.txt). This file can be found in the mozilla source tree:
https://hg.mozilla.org/mozilla-central/file/tip/security/nss/lib/ckfw/builtins/certdata.txt
It contains the certificates in PEM format and therefore
can be directly used with curl / libcurl / php_curl, or with
an Apache+mod_ssl webserver for SSL client authentication.
Just configure this file as the SSLCACertificateFile.#

***** BEGIN LICENSE BLOCK *****
This Source Code Form is subject to the terms of the Mozilla Public License,
v. 2.0. If a copy of the MPL was not distributed with this file, You can obtain
one at http://mozilla.org/MPL/2.0/.

***** END LICENSE BLOCK *****
@(#) $RCSfile: certdata.txt,v $ $Revision: 1.80 $ $Date: 2011/11/03 15:11:58 $

83

_vendor/certifi-2022.9.24.dist-info/METADATA

Normal file

@@ -0,0 +1,83 @@
Metadata-Version: 2.1
Name: certifi
Version: 2022.9.24
Summary: Python package for providing Mozilla's CA Bundle.
Home-page: https://github.com/certifi/python-certifi
Author: Kenneth Reitz
Author-email: me@kennethreitz.com
License: MPL-2.0
Project-URL: Source, https://github.com/certifi/python-certifi
Platform: UNKNOWN
Classifier: Development Status :: 5 - Production/Stable
Classifier: Intended Audience :: Developers
Classifier: License :: OSI Approved :: Mozilla Public License 2.0 (MPL 2.0)
Classifier: Natural Language :: English
Classifier: Programming Language :: Python
Classifier: Programming Language :: Python :: 3
Classifier: Programming Language :: Python :: 3 :: Only
Classifier: Programming Language :: Python :: 3.6
Classifier: Programming Language :: Python :: 3.7
Classifier: Programming Language :: Python :: 3.8
Classifier: Programming Language :: Python :: 3.9
Classifier: Programming Language :: Python :: 3.10
Classifier: Programming Language :: Python :: 3.11
Requires-Python: >=3.6
License-File: LICENSE

Certifi: Python SSL Certificates
================================

Certifi provides Mozilla's carefully curated collection of Root Certificates for
validating the trustworthiness of SSL certificates while verifying the identity
of TLS hosts. It has been extracted from the `Requests`_ project.

Installation
------------

``certifi`` is available on PyPI. Simply install it with ``pip``::

    $ pip install certifi

Usage
-----

To reference the installed certificate authority (CA) bundle, you can use the
built-in function::

    >>> import certifi

    >>> certifi.where()
    '/usr/local/lib/python3.7/site-packages/certifi/cacert.pem'

Or from the command line::

    $ python -m certifi
    /usr/local/lib/python3.7/site-packages/certifi/cacert.pem

Enjoy!

1024-bit Root Certificates
~~~~~~~~~~~~~~~~~~~~~~~~~~

Browsers and certificate authorities have concluded that 1024-bit keys are
unacceptably weak for certificates, particularly root certificates. For this
reason, Mozilla has removed any weak (i.e. 1024-bit key) certificate from its
bundle, replacing it with an equivalent strong (i.e. 2048-bit or greater key)
certificate from the same CA. Because Mozilla removed these certificates from
its bundle, ``certifi`` removed them as well.

In previous versions, ``certifi`` provided the ``certifi.old_where()`` function
to intentionally re-add the 1024-bit roots back into your bundle. This was not
recommended in production and therefore was removed at the end of 2018.

.. _`Requests`: https://requests.readthedocs.io/en/master/

Addition/Removal of Certificates
--------------------------------

Certifi does not support any addition/removal or other modification of the
CA trust store content. This project is intended to provide a reliable and
highly portable root of trust to python deployments. Look to upstream projects
for methods to use alternate trust.

14

_vendor/certifi-2022.9.24.dist-info/RECORD

Normal file

@@ -0,0 +1,14 @@
certifi-2022.9.24.dist-info/INSTALLER,sha256=zuuue4knoyJ-UwPPXg8fezS7VCrXJQrAP7zeNuwvFQg,4
certifi-2022.9.24.dist-info/LICENSE,sha256=oC9sY4-fuE0G93ZMOrCF2K9-2luTwWbaVDEkeQd8b7A,1052
certifi-2022.9.24.dist-info/METADATA,sha256=33NAOmkqKTCb2u1Ys8Zth7ABWXfEuLgp-5gLp1yK_7A,2911
certifi-2022.9.24.dist-info/RECORD,,
certifi-2022.9.24.dist-info/WHEEL,sha256=ewwEueio1C2XeHTvT17n8dZUJgOvyCWCt0WVNLClP9o,92
certifi-2022.9.24.dist-info/top_level.txt,sha256=KMu4vUCfsjLrkPbSNdgdekS-pVJzBAJFO__nI8NF6-U,8
certifi/__init__.py,sha256=luDjIGxDSrQ9O0zthdz5Lnt069Z_7eR1GIEefEaf-Ys,94
certifi/__main__.py,sha256=xBBoj905TUWBLRGANOcf7oi6e-3dMP4cEoG9OyMs11g,243
certifi/__pycache__/__init__.cpython-310.pyc,,
certifi/__pycache__/__main__.cpython-310.pyc,,
certifi/__pycache__/core.cpython-310.pyc,,
certifi/cacert.pem,sha256=3l8CcWt_qL42030rGieD3SLufICFX0bYtGhDl_EXVPI,286370
certifi/core.py,sha256=lhewz0zFb2b4ULsQurElmloYwQoecjWzPqY67P8T7iM,4219
certifi/py.typed,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU,0
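For illustration only (this is not part of the commit): each `RECORD` line has the form `path,sha256=<digest>,<size>`, where, per the wheel format, the digest is the URL-safe Base64 encoding of the file's SHA-256 hash with the trailing `=` padding removed. A minimal sketch of how such a line could be recomputed to check a vendored file; `record_hash` and `record_line` are hypothetical helper names.

```python
import base64
import hashlib


def record_hash(data: bytes) -> str:
    """Return the 'sha256=<digest>' field used in a wheel RECORD file."""
    digest = hashlib.sha256(data).digest()
    # URL-safe Base64, with the '=' padding stripped.
    encoded = base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
    return "sha256=" + encoded


def record_line(path: str, data: bytes) -> str:
    """Build a full RECORD entry: path, hash and size in bytes."""
    return f"{path},{record_hash(data)},{len(data)}"


# An empty file, such as the py.typed marker, hashes to a fixed value.
print(record_line("certifi/py.typed", b""))
```

Applied to an empty file, this reproduces the `certifi/py.typed` entry listed in the `RECORD` above.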

5

_vendor/certifi-2022.9.24.dist-info/WHEEL

Normal file

@@ -0,0 +1,5 @@
Wheel-Version: 1.0
Generator: bdist_wheel (0.37.0)
Root-Is-Purelib: true
Tag: py3-none-any

1

_vendor/certifi-2022.9.24.dist-info/top_level.txt

Normal file

@@ -0,0 +1 @@
certifi

4

_vendor/certifi/__init__.py

Normal file

@@ -0,0 +1,4 @@
from .core import contents, where

__all__ = ["contents", "where"]
__version__ = "2022.09.24"

12

_vendor/certifi/__main__.py

Normal file

@@ -0,0 +1,12 @@
import argparse

from certifi import contents, where

parser = argparse.ArgumentParser()
parser.add_argument("-c", "--contents", action="store_true")
args = parser.parse_args()

if args.contents:
    print(contents())
else:
    print(where())

BIN

_vendor/certifi/__pycache__/__init__.cpython-310.pyc

Normal file

Binary file not shown.

BIN

_vendor/certifi/__pycache__/__main__.cpython-310.pyc

Normal file

Binary file not shown.

BIN

_vendor/certifi/__pycache__/core.cpython-310.pyc

Normal file

Binary file not shown.

4708

_vendor/certifi/cacert.pem

Normal file

File diff suppressed because it is too large

108

_vendor/certifi/core.py

Normal file

@@ -0,0 +1,108 @@
"""
certifi.py
~~~~~~~~~~

This module returns the installation location of cacert.pem or its contents.
"""
import sys


if sys.version_info >= (3, 11):

    from importlib.resources import as_file, files

    _CACERT_CTX = None
    _CACERT_PATH = None

    def where() -> str:
        # This is slightly terrible, but we want to delay extracting the file
        # in cases where we're inside of a zipimport situation until someone
        # actually calls where(), but we don't want to re-extract the file
        # on every call of where(), so we'll do it once then store it in a
        # global variable.
        global _CACERT_CTX
        global _CACERT_PATH
        if _CACERT_PATH is None:
            # This is slightly janky, the importlib.resources API wants you to
            # manage the cleanup of this file, so it doesn't actually return a
            # path, it returns a context manager that will give you the path
            # when you enter it and will do any cleanup when you leave it. In
            # the common case of not needing a temporary file, it will just
            # return the file system location and the __exit__() is a no-op.
            #
            # We also have to hold onto the actual context manager, because
            # it will do the cleanup whenever it gets garbage collected, so
            # we will also store that at the global level as well.
            _CACERT_CTX = as_file(files("certifi").joinpath("cacert.pem"))
            _CACERT_PATH = str(_CACERT_CTX.__enter__())

        return _CACERT_PATH

    def contents() -> str:
        return files("certifi").joinpath("cacert.pem").read_text(encoding="ascii")

elif sys.version_info >= (3, 7):

    from importlib.resources import path as get_path, read_text

    _CACERT_CTX = None
    _CACERT_PATH = None

    def where() -> str:
        # This is slightly terrible, but we want to delay extracting the
        # file in cases where we're inside of a zipimport situation until
        # someone actually calls where(), but we don't want to re-extract
        # the file on every call of where(), so we'll do it once then store
        # it in a global variable.
        global _CACERT_CTX
        global _CACERT_PATH
        if _CACERT_PATH is None:
            # This is slightly janky, the importlib.resources API wants you
            # to manage the cleanup of this file, so it doesn't actually
            # return a path, it returns a context manager that will give
            # you the path when you enter it and will do any cleanup when
            # you leave it. In the common case of not needing a temporary
            # file, it will just return the file system location and the
            # __exit__() is a no-op.
            #
            # We also have to hold onto the actual context manager, because
            # it will do the cleanup whenever it gets garbage collected, so
            # we will also store that at the global level as well.
            _CACERT_CTX = get_path("certifi", "cacert.pem")
            _CACERT_PATH = str(_CACERT_CTX.__enter__())

        return _CACERT_PATH

    def contents() -> str:
        return read_text("certifi", "cacert.pem", encoding="ascii")

else:
    import os
    import types
    from typing import Union

    Package = Union[types.ModuleType, str]
    Resource = Union[str, "os.PathLike"]

    # This fallback will work for Python versions prior to 3.7 that lack the
    # importlib.resources module but relies on the existing `where` function
    # so won't address issues with environments like PyOxidizer that don't set
    # __file__ on modules.
    def read_text(
        package: Package,
        resource: Resource,
        encoding: str = 'utf-8',
        errors: str = 'strict'
    ) -> str:
        with open(where(), encoding=encoding) as data:
            return data.read()

    # If we don't have importlib.resources, then we will just do the old logic
    # of assuming we're on the filesystem and munge the path directly.
    def where() -> str:
        f = os.path.dirname(__file__)

        return os.path.join(f, "cacert.pem")

    def contents() -> str:
        return read_text("certifi", "cacert.pem", encoding="ascii")

0

_vendor/certifi/py.typed

Normal file

1

_vendor/charset_normalizer-2.1.1.dist-info/INSTALLER

Normal file

@@ -0,0 +1 @@
pip

21

_vendor/charset_normalizer-2.1.1.dist-info/LICENSE

Normal file

@@ -0,0 +1,21 @@
MIT License

Copyright (c) 2019 TAHRI Ahmed R.

Permission is hereby granted, free of charge, to any person obtaining a copy
of this software and associated documentation files (the "Software"), to deal
in the Software without restriction, including without limitation the rights
to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
copies of the Software, and to permit persons to whom the Software is
furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all
copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
SOFTWARE.

269

_vendor/charset_normalizer-2.1.1.dist-info/METADATA

Normal file

@@ -0,0 +1,269 @@
Metadata-Version: 2.1
Name: charset-normalizer
Version: 2.1.1
Summary: The Real First Universal Charset Detector. Open, modern and actively maintained alternative to Chardet.
Home-page: https://github.com/ousret/charset_normalizer
Author: Ahmed TAHRI @Ousret
Author-email: ahmed.tahri@cloudnursery.dev
License: MIT
Project-URL: Bug Reports, https://github.com/Ousret/charset_normalizer/issues
Project-URL: Documentation, https://charset-normalizer.readthedocs.io/en/latest
Keywords: encoding,i18n,txt,text,charset,charset-detector,normalization,unicode,chardet
Classifier: Development Status :: 5 - Production/Stable
Classifier: License :: OSI Approved :: MIT License
Classifier: Intended Audience :: Developers
Classifier: Topic :: Software Development :: Libraries :: Python Modules
Classifier: Operating System :: OS Independent
Classifier: Programming Language :: Python
Classifier: Programming Language :: Python :: 3
Classifier: Programming Language :: Python :: 3.6
Classifier: Programming Language :: Python :: 3.7
Classifier: Programming Language :: Python :: 3.8
Classifier: Programming Language :: Python :: 3.9
Classifier: Programming Language :: Python :: 3.10
Classifier: Programming Language :: Python :: 3.11
Classifier: Topic :: Text Processing :: Linguistic
Classifier: Topic :: Utilities
Classifier: Programming Language :: Python :: Implementation :: PyPy
Classifier: Typing :: Typed
Requires-Python: >=3.6.0
Description-Content-Type: text/markdown
License-File: LICENSE
Provides-Extra: unicode_backport
Requires-Dist: unicodedata2 ; extra == 'unicode_backport'

<h1 align="center">Charset Detection, for Everyone 👋 <a href="https://twitter.com/intent/tweet?text=The%20Real%20First%20Universal%20Charset%20%26%20Language%20Detector&url=https://www.github.com/Ousret/charset_normalizer&hashtags=python,encoding,chardet,developers"><img src="https://img.shields.io/twitter/url/http/shields.io.svg?style=social"/></a></h1>

<p align="center">
  <sup>The Real First Universal Charset Detector</sup><br>
  <a href="https://pypi.org/project/charset-normalizer">
    <img src="https://img.shields.io/pypi/pyversions/charset_normalizer.svg?orange=blue" />
  </a>
  <a href="https://codecov.io/gh/Ousret/charset_normalizer">
    <img src="https://codecov.io/gh/Ousret/charset_normalizer/branch/master/graph/badge.svg" />
  </a>
  <a href="https://pepy.tech/project/charset-normalizer/">
    <img alt="Download Count Total" src="https://pepy.tech/badge/charset-normalizer/month" />
  </a>
</p>

> A library that helps you read text from an unknown charset encoding.<br /> Motivated by `chardet`,
> I'm trying to resolve the issue by taking a new approach.
> All IANA character set names for which the Python core library provides codecs are supported.

<p align="center">
  >>>>> <a href="https://charsetnormalizerweb.ousret.now.sh" target="_blank">👉 Try Me Online Now, Then Adopt Me 👈 </a> <<<<<
</p>

This project offers you an alternative to **Universal Charset Encoding Detector**, also known as **Chardet**.

| Feature | [Chardet](https://github.com/chardet/chardet) | Charset Normalizer | [cChardet](https://github.com/PyYoshi/cChardet) |
| ------------- | :-------------: | :------------------: | :------------------: |
| `Fast` | ❌<br> | ✅<br> | ✅ <br> |
| `Universal**` | ❌ | ✅ | ❌ |
| `Reliable` **without** distinguishable standards | ❌ | ✅ | ✅ |
| `Reliable` **with** distinguishable standards | ✅ | ✅ | ✅ |
| `License` | LGPL-2.1<br>_restrictive_ | MIT | MPL-1.1<br>_restrictive_ |
| `Native Python` | ✅ | ✅ | ❌ |
| `Detect spoken language` | ❌ | ✅ | N/A |
| `UnicodeDecodeError Safety` | ❌ | ✅ | ❌ |
| `Whl Size` | 193.6 kB | 39.5 kB | ~200 kB |
| `Supported Encoding` | 33 | :tada: [93](https://charset-normalizer.readthedocs.io/en/latest/user/support.html#supported-encodings) | 40

<p align="center">
<img src="https://i.imgflip.com/373iay.gif" alt="Reading Normalized Text" width="226"/><img src="https://media.tenor.com/images/c0180f70732a18b4965448d33adba3d0/tenor.gif" alt="Cat Reading Text" width="200"/>

*\*\* : They are clearly using specific code for a specific encoding even if covering most of used one*<br>
Did you got there because of the logs? See [https://charset-normalizer.readthedocs.io/en/latest/user/miscellaneous.html](https://charset-normalizer.readthedocs.io/en/latest/user/miscellaneous.html)

## ⭐ Your support

*Fork, test-it, star-it, submit your ideas! We do listen.*

## ⚡ Performance

This package offer better performance than its counterpart Chardet. Here are some numbers.

| Package | Accuracy | Mean per file (ms) | File per sec (est) |
| ------------- | :-------------: | :------------------: | :------------------: |
| [chardet](https://github.com/chardet/chardet) | 86 % | 200 ms | 5 file/sec |
| charset-normalizer | **98 %** | **39 ms** | 26 file/sec |

| Package | 99th percentile | 95th percentile | 50th percentile |
| ------------- | :-------------: | :------------------: | :------------------: |
| [chardet](https://github.com/chardet/chardet) | 1200 ms | 287 ms | 23 ms |
| charset-normalizer | 400 ms | 200 ms | 15 ms |

Chardet's performance on larger file (1MB+) are very poor. Expect huge difference on large payload.

> Stats are generated using 400+ files using default parameters. More details on used files, see GHA workflows.
> And yes, these results might change at any time. The dataset can be updated to include more files.
> The actual delays heavily depends on your CPU capabilities. The factors should remain the same.
> Keep in mind that the stats are generous and that Chardet accuracy vs our is measured using Chardet initial capability
> (eg. Supported Encoding) Challenge-them if you want.

[cchardet](https://github.com/PyYoshi/cChardet) is a non-native (cpp binding) and unmaintained faster alternative with
a better accuracy than chardet but lower than this package. If speed is the most important factor, you should try it.

## ✨ Installation

Using PyPi for latest stable
```sh
pip install charset-normalizer -U
```

If you want a more up-to-date `unicodedata` than the one available in your Python setup.
```sh
pip install charset-normalizer[unicode_backport] -U
```

## 🚀 Basic Usage

### CLI
This package comes with a CLI.

```
usage: normalizer [-h] [-v] [-a] [-n] [-m] [-r] [-f] [-t THRESHOLD]
                  file [file ...]

The Real First Universal Charset Detector. Discover originating encoding used
on text file. Normalize text to unicode.

positional arguments:
  files                 File(s) to be analysed

optional arguments:
  -h, --help            show this help message and exit
  -v, --verbose         Display complementary information about file if any.
                        Stdout will contain logs about the detection process.
  -a, --with-alternative
                        Output complementary possibilities if any. Top-level
                        JSON WILL be a list.
  -n, --normalize       Permit to normalize input file. If not set, program
                        does not write anything.
  -m, --minimal         Only output the charset detected to STDOUT. Disabling
                        JSON output.
  -r, --replace         Replace file when trying to normalize it instead of
                        creating a new one.
  -f, --force           Replace file without asking if you are sure, use this
                        flag with caution.
  -t THRESHOLD, --threshold THRESHOLD
                        Define a custom maximum amount of chaos allowed in
                        decoded content. 0. <= chaos <= 1.
  --version             Show version information and exit.
```

```bash
normalizer ./data/sample.1.fr.srt
```

:tada: Since version 1.4.0 the CLI produce easily usable stdout result in JSON format.

```json
{
    "path": "/home/default/projects/charset_normalizer/data/sample.1.fr.srt",
    "encoding": "cp1252",
    "encoding_aliases": [
        "1252",
        "windows_1252"
    ],
    "alternative_encodings": [
        "cp1254",
        "cp1256",
        "cp1258",
        "iso8859_14",
        "iso8859_15",
        "iso8859_16",
        "iso8859_3",
        "iso8859_9",
        "latin_1",
        "mbcs"
    ],
    "language": "French",
    "alphabets": [
        "Basic Latin",
        "Latin-1 Supplement"
    ],
    "has_sig_or_bom": false,
    "chaos": 0.149,
    "coherence": 97.152,
    "unicode_path": null,
    "is_preferred": true
}
```

### Python
*Just print out normalized text*
```python
from charset_normalizer import from_path

results = from_path('./my_subtitle.srt')

print(str(results.best()))
```

*Normalize any text file*
```python
from charset_normalizer import normalize
try:
    normalize('./my_subtitle.srt') # should write to disk my_subtitle-***.srt
except IOError as e:
    print('Sadly, we are unable to perform charset normalization.', str(e))
```

*Upgrade your code without effort*
```python
from charset_normalizer import detect
```

The above code will behave the same as **chardet**. We ensure that we offer the best (reasonable) BC result possible.

See the docs for advanced usage : [readthedocs.io](https://charset-normalizer.readthedocs.io/en/latest/)

## 😇 Why

When I started using Chardet, I noticed that it was not suited to my expectations, and I wanted to propose a
reliable alternative using a completely different method. Also! I never back down on a good challenge!

I **don't care** about the **originating charset** encoding, because **two different tables** can
produce **two identical rendered string.**
What I want is to get readable text, the best I can.

In a way, **I'm brute forcing text decoding.** How cool is that ? 😎

Don't confuse package **ftfy** with charset-normalizer or chardet. ftfy goal is to repair unicode string whereas charset-normalizer to convert raw file in unknown encoding to unicode.

## 🍰 How

- Discard all charset encoding table that could not fit the binary content.
- Measure chaos, or the mess once opened (by chunks) with a corresponding charset encoding.
- Extract matches with the lowest mess detected.
- Additionally, we measure coherence / probe for a language.
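For illustration only (this is neither part of the commit nor of the vendored file): the first three steps listed above can be sketched with the standard library alone. This is a toy version under stated assumptions, not the library's actual algorithm: the candidate list is arbitrary, and `mess_ratio` and `guess` are hypothetical names whose "mess" measure simply counts characters that are neither printable nor whitespace.

```python
def mess_ratio(text: str) -> float:
    """Toy 'chaos' measure: share of characters that look like garbage."""
    if not text:
        return 0.0
    suspicious = sum(
        1 for ch in text if not (ch.isprintable() or ch.isspace())
    )
    return suspicious / len(text)


def guess(payload: bytes, candidates=("utf_8", "cp1252", "latin_1")):
    """Return (encoding, text) for the least messy decoding, or None."""
    matches = []
    for name in candidates:
        try:
            # Step 1: discard encodings that cannot fit the binary content.
            text = payload.decode(name)
        except UnicodeDecodeError:
            continue
        # Step 2: measure the mess of what was decoded.
        matches.append((mess_ratio(text), name, text))
    if not matches:
        return None
    # Step 3: keep the match with the lowest mess (first one wins ties).
    _, name, text = min(matches, key=lambda match: match[0])
    return name, text


sample = "Bonjour, ça va ?".encode("cp1252")
print(guess(sample))
```

In this sample, `utf_8` is discarded because the byte for `ç` is not valid UTF-8 in that position, and `cp1252` wins the tie with `latin_1` only because it is listed first. The real library additionally probes for language coherence, which this sketch omits.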

**Wait a minute**, what is chaos/mess and coherence according to **YOU ?**

*Chaos :* I opened hundred of text files, **written by humans**, with the wrong encoding table. **I observed**, then
**I established** some ground rules about **what is obvious** when **it seems like** a mess.
I know that my interpretation of what is chaotic is very subjective, feel free to contribute in order to
improve or rewrite it.

*Coherence :* For each language there is on earth, we have computed ranked letter appearance occurrences (the best we can). So I thought
that intel is worth something here. So I use those records against decoded text to check if I can detect intelligent design.

## ⚡ Known limitations

- Language detection is unreliable when text contains two or more languages sharing identical letters. (eg. HTML (english tags) + Turkish content (Sharing Latin characters))
- Every charset detector heavily depends on sufficient content. In common cases, do not bother run detection on very tiny content.

## 👤 Contributing

Contributions, issues and feature requests are very much welcome.<br />
Feel free to check [issues page](https://github.com/ousret/charset_normalizer/issues) if you want to contribute.

## 📝 License

Copyright © 2019 [Ahmed TAHRI @Ousret](https://github.com/Ousret).<br />
This project is [MIT](https://github.com/Ousret/charset_normalizer/blob/master/LICENSE) licensed.

Characters frequencies used in this project © 2012 [Denny Vrandečić](http://simia.net/letters/)

33

_vendor/charset_normalizer-2.1.1.dist-info/RECORD

Normal file

33

_vendor/charset_normalizer-2.1.1.dist-info/RECORD

Normal file

@@ -0,0 +1,33 @@

../../bin/normalizer,sha256=qSpvGsyLwjZW3uUUIySX3JlQOmSuDLIwdxOnC53iQiU,243
charset_normalizer-2.1.1.dist-info/INSTALLER,sha256=zuuue4knoyJ-UwPPXg8fezS7VCrXJQrAP7zeNuwvFQg,4
charset_normalizer-2.1.1.dist-info/LICENSE,sha256=6zGgxaT7Cbik4yBV0lweX5w1iidS_vPNcgIT0cz-4kE,1070
charset_normalizer-2.1.1.dist-info/METADATA,sha256=C99l12g4d1E9_UiW-mqPCWx7v2M_lYGWxy1GTOjXSsA,11942
charset_normalizer-2.1.1.dist-info/RECORD,,
charset_normalizer-2.1.1.dist-info/WHEEL,sha256=G16H4A3IeoQmnOrYV4ueZGKSjhipXx8zc8nu9FGlvMA,92
charset_normalizer-2.1.1.dist-info/entry_points.txt,sha256=uYo8aIGLWv8YgWfSna5HnfY_En4pkF1w4bgawNAXzP0,76
charset_normalizer-2.1.1.dist-info/top_level.txt,sha256=7ASyzePr8_xuZWJsnqJjIBtyV8vhEo0wBCv1MPRRi3Q,19
charset_normalizer/__init__.py,sha256=jGhhf1IcOgCpZsr593E9fPvjWKnflVqHe_LwkOJjInU,1790
charset_normalizer/__pycache__/__init__.cpython-310.pyc,,
charset_normalizer/__pycache__/api.cpython-310.pyc,,
charset_normalizer/__pycache__/cd.cpython-310.pyc,,
charset_normalizer/__pycache__/constant.cpython-310.pyc,,
charset_normalizer/__pycache__/legacy.cpython-310.pyc,,
charset_normalizer/__pycache__/md.cpython-310.pyc,,
charset_normalizer/__pycache__/models.cpython-310.pyc,,
charset_normalizer/__pycache__/utils.cpython-310.pyc,,
charset_normalizer/__pycache__/version.cpython-310.pyc,,
charset_normalizer/api.py,sha256=euVPmjAMbjpqhEHPjfKtyy1mK52U0TOUBUQgM_Qy6eE,19191
charset_normalizer/assets/__init__.py,sha256=r7aakPaRIc2FFG2mw2V8NOTvkl25_euKZ3wPf5SAVa4,15222
charset_normalizer/assets/__pycache__/__init__.cpython-310.pyc,,
charset_normalizer/cd.py,sha256=Pxdkbn4cy0iZF42KTb1FiWIqqKobuz_fDjGwc6JMNBc,10811
charset_normalizer/cli/__init__.py,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU,0
charset_normalizer/cli/__pycache__/__init__.cpython-310.pyc,,
charset_normalizer/cli/__pycache__/normalizer.cpython-310.pyc,,
charset_normalizer/cli/normalizer.py,sha256=FmD1RXeMpRBg_mjR0MaJhNUpM2qZ8wz2neAE7AayBeg,9521
charset_normalizer/constant.py,sha256=NgU-pY8JH2a9lkVT8oKwAFmIUYNKOuSBwZgF9MrlNCM,19157
charset_normalizer/legacy.py,sha256=XKeZOts_HdYQU_Jb3C9ZfOjY2CiUL132k9_nXer8gig,3384
charset_normalizer/md.py,sha256=pZP8IVpSC82D8INA9Tf_y0ijJSRI-UIncZvLdfTWEd4,17642
charset_normalizer/models.py,sha256=i68YdlSLTEI3EEBVXq8TLNAbyyjrLC2OWszc-OBAk9I,13167
charset_normalizer/py.typed,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU,0
charset_normalizer/utils.py,sha256=ykOznhcAeH-ODLBWJuI7t1nbwa1SAfN_bDYTCJGyh4U,11771
charset_normalizer/version.py,sha256=_eh2MA3qS__IajlePQxKBmlw6zaBDvPYlLdEgxgIojw,79

5

_vendor/charset_normalizer-2.1.1.dist-info/WHEEL

Normal file

@@ -0,0 +1,5 @@

Wheel-Version: 1.0
Generator: bdist_wheel (0.37.1)
Root-Is-Purelib: true
Tag: py3-none-any

@@ -0,0 +1,2 @@

[console_scripts]
normalizer = charset_normalizer.cli.normalizer:cli_detect

1

_vendor/charset_normalizer-2.1.1.dist-info/top_level.txt

Normal file

@@ -0,0 +1 @@

charset_normalizer

56

_vendor/charset_normalizer/__init__.py

Normal file

@@ -0,0 +1,56 @@

# -*- coding: utf-8 -*-
"""
Charset-Normalizer
~~~~~~~~~~~~~~
The Real First Universal Charset Detector.
A library that helps you read text from an unknown charset encoding.
Motivated by chardet, This package is trying to resolve the issue by taking a new approach.
All IANA character set names for which the Python core library provides codecs are supported.

Basic usage:
    >>> from charset_normalizer import from_bytes
    >>> results = from_bytes('Bсеки човек има право на образование. Oбразованието!'.encode('utf_8'))
    >>> best_guess = results.best()
    >>> str(best_guess)
    'Bсеки човек има право на образование. Oбразованието!'

Others methods and usages are available - see the full documentation
at <https://github.com/Ousret/charset_normalizer>.
:copyright: (c) 2021 by Ahmed TAHRI
:license: MIT, see LICENSE for more details.
"""
import logging

from .api import from_bytes, from_fp, from_path, normalize
from .legacy import (
    CharsetDetector,
    CharsetDoctor,
    CharsetNormalizerMatch,
    CharsetNormalizerMatches,
    detect,
)
from .models import CharsetMatch, CharsetMatches
from .utils import set_logging_handler
from .version import VERSION, __version__

__all__ = (
    "from_fp",
    "from_path",
    "from_bytes",
    "normalize",
    "detect",
    "CharsetMatch",
    "CharsetMatches",
    "CharsetNormalizerMatch",
    "CharsetNormalizerMatches",
    "CharsetDetector",
    "CharsetDoctor",
    "__version__",
    "VERSION",
    "set_logging_handler",
)

# Attach a NullHandler to the top level logger by default
# https://docs.python.org/3.3/howto/logging.html#configuring-logging-for-a-library

logging.getLogger("charset_normalizer").addHandler(logging.NullHandler())

BIN

_vendor/charset_normalizer/__pycache__/__init__.cpython-310.pyc

Normal file

Binary file not shown.

BIN

_vendor/charset_normalizer/__pycache__/api.cpython-310.pyc

Normal file

Binary file not shown.

BIN

_vendor/charset_normalizer/__pycache__/cd.cpython-310.pyc

Normal file

Binary file not shown.

BIN

_vendor/charset_normalizer/__pycache__/constant.cpython-310.pyc

Normal file

Binary file not shown.

BIN

_vendor/charset_normalizer/__pycache__/legacy.cpython-310.pyc

Normal file

Binary file not shown.

BIN

_vendor/charset_normalizer/__pycache__/md.cpython-310.pyc

Normal file

Binary file not shown.

BIN

_vendor/charset_normalizer/__pycache__/models.cpython-310.pyc

Normal file

Binary file not shown.

BIN

_vendor/charset_normalizer/__pycache__/utils.cpython-310.pyc

Normal file

Binary file not shown.

BIN

_vendor/charset_normalizer/__pycache__/version.cpython-310.pyc

Normal file

Binary file not shown.

584

_vendor/charset_normalizer/api.py

Normal file

@@ -0,0 +1,584 @@

import logging
import warnings
from os import PathLike
from os.path import basename, splitext
from typing import Any, BinaryIO, List, Optional, Set

from .cd import (
    coherence_ratio,
    encoding_languages,
    mb_encoding_languages,
    merge_coherence_ratios,
)
from .constant import IANA_SUPPORTED, TOO_BIG_SEQUENCE, TOO_SMALL_SEQUENCE, TRACE
from .md import mess_ratio
from .models import CharsetMatch, CharsetMatches
from .utils import (
    any_specified_encoding,
    cut_sequence_chunks,
    iana_name,
    identify_sig_or_bom,
    is_cp_similar,
    is_multi_byte_encoding,
    should_strip_sig_or_bom,
)

# Will most likely be controversial
# logging.addLevelName(TRACE, "TRACE")
logger = logging.getLogger("charset_normalizer")
explain_handler = logging.StreamHandler()
explain_handler.setFormatter(
    logging.Formatter("%(asctime)s | %(levelname)s | %(message)s")
)


def from_bytes(
    sequences: bytes,
    steps: int = 5,
    chunk_size: int = 512,
    threshold: float = 0.2,
    cp_isolation: Optional[List[str]] = None,
    cp_exclusion: Optional[List[str]] = None,
    preemptive_behaviour: bool = True,
    explain: bool = False,
) -> CharsetMatches:
    """
    Given a raw bytes sequence, return the best possible charsets usable to render str objects.
    If there are no results, it is a strong indicator that the source is binary/not text.
    By default, the process will extract 5 blocks of 512 bytes each to assess the mess and coherence of a given sequence.
    And will give up a particular code page after 20% of measured mess. Those criteria are customizable at will.

    The preemptive behavior DOES NOT replace the traditional detection workflow, it prioritizes a particular code page
    but never takes it for granted. Can improve the performance.

    You may want to focus your attention on some code pages and/or not others; use cp_isolation and cp_exclusion for that
    purpose.

    This function will strip the SIG in the payload/sequence every time except on UTF-16, UTF-32.
    By default the library does not set up any handler other than the NullHandler. If you choose to set the 'explain'
    toggle to True, it will alter the logger configuration to add a StreamHandler that is suitable for debugging.
    Custom logging format and handler can be set manually.
    """

    if not isinstance(sequences, (bytearray, bytes)):
        raise TypeError(
            "Expected object of type bytes or bytearray, got: {0}".format(
                type(sequences)
            )
        )

    if explain:
        previous_logger_level: int = logger.level
        logger.addHandler(explain_handler)
        logger.setLevel(TRACE)

    length: int = len(sequences)

    if length == 0:
        logger.debug("Encoding detection on empty bytes, assuming utf_8 intention.")
        if explain:
            logger.removeHandler(explain_handler)
            logger.setLevel(previous_logger_level or logging.WARNING)
        return CharsetMatches([CharsetMatch(sequences, "utf_8", 0.0, False, [], "")])

    if cp_isolation is not None:
        logger.log(
            TRACE,
            "cp_isolation is set. use this flag for debugging purpose. "
            "limited list of encoding allowed : %s.",
            ", ".join(cp_isolation),
        )
        cp_isolation = [iana_name(cp, False) for cp in cp_isolation]
    else:
        cp_isolation = []

    if cp_exclusion is not None:
        logger.log(
            TRACE,
            "cp_exclusion is set. use this flag for debugging purpose. "
            "limited list of encoding excluded : %s.",
            ", ".join(cp_exclusion),
        )
        cp_exclusion = [iana_name(cp, False) for cp in cp_exclusion]
    else:
        cp_exclusion = []

    if length <= (chunk_size * steps):
        logger.log(
            TRACE,
            "override steps (%i) and chunk_size (%i) as content does not fit (%i byte(s) given) parameters.",
            steps,
            chunk_size,
            length,
        )
        steps = 1
        chunk_size = length

    if steps > 1 and length / steps < chunk_size:
        chunk_size = int(length / steps)

    is_too_small_sequence: bool = len(sequences) < TOO_SMALL_SEQUENCE
    is_too_large_sequence: bool = len(sequences) >= TOO_BIG_SEQUENCE

    if is_too_small_sequence:
        logger.log(
            TRACE,
            "Trying to detect encoding from a tiny portion of ({}) byte(s).".format(
                length
            ),
        )
    elif is_too_large_sequence:
        logger.log(
            TRACE,
            "Using lazy str decoding because the payload is quite large, ({}) byte(s).".format(
                length
            ),
        )

    prioritized_encodings: List[str] = []

    specified_encoding: Optional[str] = (
        any_specified_encoding(sequences) if preemptive_behaviour else None
    )

    if specified_encoding is not None:
        prioritized_encodings.append(specified_encoding)
        logger.log(
            TRACE,
            "Detected declarative mark in sequence. Priority +1 given for %s.",
            specified_encoding,
        )

    tested: Set[str] = set()
    tested_but_hard_failure: List[str] = []
    tested_but_soft_failure: List[str] = []

    fallback_ascii: Optional[CharsetMatch] = None
    fallback_u8: Optional[CharsetMatch] = None
    fallback_specified: Optional[CharsetMatch] = None

    results: CharsetMatches = CharsetMatches()

    sig_encoding, sig_payload = identify_sig_or_bom(sequences)

    if sig_encoding is not None:
        prioritized_encodings.append(sig_encoding)
        logger.log(
            TRACE,
            "Detected a SIG or BOM mark on first %i byte(s). Priority +1 given for %s.",
            len(sig_payload),
            sig_encoding,
        )

    prioritized_encodings.append("ascii")

    if "utf_8" not in prioritized_encodings:
        prioritized_encodings.append("utf_8")

    for encoding_iana in prioritized_encodings + IANA_SUPPORTED:

        if cp_isolation and encoding_iana not in cp_isolation:
            continue

        if cp_exclusion and encoding_iana in cp_exclusion:
            continue

        if encoding_iana in tested:
            continue

        tested.add(encoding_iana)

        decoded_payload: Optional[str] = None
        bom_or_sig_available: bool = sig_encoding == encoding_iana
        strip_sig_or_bom: bool = bom_or_sig_available and should_strip_sig_or_bom(
            encoding_iana
        )

        if encoding_iana in {"utf_16", "utf_32"} and not bom_or_sig_available:
            logger.log(
                TRACE,
                "Encoding %s wont be tested as-is because it require a BOM. Will try some sub-encoder LE/BE.",
                encoding_iana,
            )
            continue

        try:
            is_multi_byte_decoder: bool = is_multi_byte_encoding(encoding_iana)
        except (ModuleNotFoundError, ImportError):
            logger.log(
                TRACE,
                "Encoding %s does not provide an IncrementalDecoder",
                encoding_iana,
            )
            continue

        try:
            if is_too_large_sequence and is_multi_byte_decoder is False:
                str(
                    sequences[: int(50e4)]
                    if strip_sig_or_bom is False
                    else sequences[len(sig_payload) : int(50e4)],
                    encoding=encoding_iana,
                )
            else:
                decoded_payload = str(
                    sequences
                    if strip_sig_or_bom is False
                    else sequences[len(sig_payload) :],
                    encoding=encoding_iana,
                )
        except (UnicodeDecodeError, LookupError) as e:
            if not isinstance(e, LookupError):
                logger.log(
                    TRACE,
                    "Code page %s does not fit given bytes sequence at ALL. %s",
                    encoding_iana,
                    str(e),
                )
            tested_but_hard_failure.append(encoding_iana)
            continue

        similar_soft_failure_test: bool = False

        for encoding_soft_failed in tested_but_soft_failure:
            if is_cp_similar(encoding_iana, encoding_soft_failed):
                similar_soft_failure_test = True
                break

        if similar_soft_failure_test:
            logger.log(
                TRACE,
                "%s is deemed too similar to code page %s and was consider unsuited already. Continuing!",
                encoding_iana,
                encoding_soft_failed,
            )
            continue

        r_ = range(
            0 if not bom_or_sig_available else len(sig_payload),
            length,
            int(length / steps),
        )

        multi_byte_bonus: bool = (
            is_multi_byte_decoder
            and decoded_payload is not None
            and len(decoded_payload) < length
        )

        if multi_byte_bonus:
            logger.log(
                TRACE,
                "Code page %s is a multi byte encoding table and it appear that at least one character "
                "was encoded using n-bytes.",
                encoding_iana,
            )

        max_chunk_gave_up: int = int(len(r_) / 4)

        max_chunk_gave_up = max(max_chunk_gave_up, 2)
        early_stop_count: int = 0
        lazy_str_hard_failure = False

        md_chunks: List[str] = []
        md_ratios = []

        try:
            for chunk in cut_sequence_chunks(
                sequences,
                encoding_iana,
                r_,
                chunk_size,
                bom_or_sig_available,
                strip_sig_or_bom,
                sig_payload,
                is_multi_byte_decoder,
                decoded_payload,
            ):
                md_chunks.append(chunk)

                md_ratios.append(mess_ratio(chunk, threshold))

                if md_ratios[-1] >= threshold:
                    early_stop_count += 1

                if (early_stop_count >= max_chunk_gave_up) or (
                    bom_or_sig_available and strip_sig_or_bom is False
                ):
                    break
        except UnicodeDecodeError as e:  # Lazy str loading may have missed something there
            logger.log(
                TRACE,
                "LazyStr Loading: After MD chunk decode, code page %s does not fit given bytes sequence at ALL. %s",
                encoding_iana,
                str(e),
            )
            early_stop_count = max_chunk_gave_up
            lazy_str_hard_failure = True

        # We might want to check the sequence again with the whole content
        # Only if initial MD tests passes
        if (
            not lazy_str_hard_failure
            and is_too_large_sequence
            and not is_multi_byte_decoder
        ):
            try:
                sequences[int(50e3) :].decode(encoding_iana, errors="strict")
            except UnicodeDecodeError as e:
                logger.log(
                    TRACE,
                    "LazyStr Loading: After final lookup, code page %s does not fit given bytes sequence at ALL. %s",
                    encoding_iana,
                    str(e),
                )
                tested_but_hard_failure.append(encoding_iana)
                continue

        mean_mess_ratio: float = sum(md_ratios) / len(md_ratios) if md_ratios else 0.0
        if mean_mess_ratio >= threshold or early_stop_count >= max_chunk_gave_up:
            tested_but_soft_failure.append(encoding_iana)
            logger.log(
                TRACE,
                "%s was excluded because of initial chaos probing. Gave up %i time(s). "
                "Computed mean chaos is %f %%.",
                encoding_iana,
                early_stop_count,
                round(mean_mess_ratio * 100, ndigits=3),
            )
            # Preparing those fallbacks in case we got nothing.
            if (
                encoding_iana in ["ascii", "utf_8", specified_encoding]
                and not lazy_str_hard_failure
            ):
                fallback_entry = CharsetMatch(
                    sequences, encoding_iana, threshold, False, [], decoded_payload
                )
                if encoding_iana == specified_encoding:
                    fallback_specified = fallback_entry
                elif encoding_iana == "ascii":
                    fallback_ascii = fallback_entry
                else:
                    fallback_u8 = fallback_entry
            continue

        logger.log(
            TRACE,
            "%s passed initial chaos probing. Mean measured chaos is %f %%",
            encoding_iana,
            round(mean_mess_ratio * 100, ndigits=3),
        )

        if not is_multi_byte_decoder:
            target_languages: List[str] = encoding_languages(encoding_iana)
        else:
            target_languages = mb_encoding_languages(encoding_iana)

        if target_languages:
            logger.log(
                TRACE,
                "{} should target any language(s) of {}".format(
                    encoding_iana, str(target_languages)
                ),
            )

        cd_ratios = []

        # We shall skip the CD when its about ASCII
        # Most of the time its not relevant to run "language-detection" on it.
        if encoding_iana != "ascii":
            for chunk in md_chunks:
                chunk_languages = coherence_ratio(
                    chunk, 0.1, ",".join(target_languages) if target_languages else None
                )

                cd_ratios.append(chunk_languages)

        cd_ratios_merged = merge_coherence_ratios(cd_ratios)

        if cd_ratios_merged:
            logger.log(
                TRACE,
                "We detected language {} using {}".format(
                    cd_ratios_merged, encoding_iana
                ),
            )

        results.append(
            CharsetMatch(
                sequences,
                encoding_iana,
                mean_mess_ratio,
                bom_or_sig_available,
                cd_ratios_merged,
                decoded_payload,
            )
        )

        if (
            encoding_iana in [specified_encoding, "ascii", "utf_8"]
            and mean_mess_ratio < 0.1
        ):
            logger.debug(
                "Encoding detection: %s is most likely the one.", encoding_iana
            )
            if explain:
                logger.removeHandler(explain_handler)
                logger.setLevel(previous_logger_level)
            return CharsetMatches([results[encoding_iana]])

        if encoding_iana == sig_encoding:
            logger.debug(
                "Encoding detection: %s is most likely the one as we detected a BOM or SIG within "
                "the beginning of the sequence.",
                encoding_iana,
            )
            if explain:
                logger.removeHandler(explain_handler)
                logger.setLevel(previous_logger_level)
            return CharsetMatches([results[encoding_iana]])

    if len(results) == 0:
        if fallback_u8 or fallback_ascii or fallback_specified:
            logger.log(
                TRACE,
                "Nothing got out of the detection process. Using ASCII/UTF-8/Specified fallback.",
            )

        if fallback_specified:
            logger.debug(
                "Encoding detection: %s will be used as a fallback match",
                fallback_specified.encoding,
            )
            results.append(fallback_specified)
        elif (
            (fallback_u8 and fallback_ascii is None)
            or (
                fallback_u8
                and fallback_ascii
                and fallback_u8.fingerprint != fallback_ascii.fingerprint
            )
            or (fallback_u8 is not None)
        ):
            logger.debug("Encoding detection: utf_8 will be used as a fallback match")
            results.append(fallback_u8)
        elif fallback_ascii:
            logger.debug("Encoding detection: ascii will be used as a fallback match")
            results.append(fallback_ascii)

    if results:
        logger.debug(
            "Encoding detection: Found %s as plausible (best-candidate) for content. With %i alternatives.",
            results.best().encoding,  # type: ignore
            len(results) - 1,
        )
    else:
        logger.debug("Encoding detection: Unable to determine any suitable charset.")

    if explain:
        logger.removeHandler(explain_handler)
        logger.setLevel(previous_logger_level)

    return results


def from_fp(
    fp: BinaryIO,
    steps: int = 5,
    chunk_size: int = 512,
    threshold: float = 0.20,
    cp_isolation: Optional[List[str]] = None,
    cp_exclusion: Optional[List[str]] = None,
    preemptive_behaviour: bool = True,
    explain: bool = False,
) -> CharsetMatches:
    """
    Same as the function from_bytes, but using a file pointer that is already open.
    Will not close the file pointer.
    """
    return from_bytes(
        fp.read(),
        steps,
        chunk_size,
        threshold,
        cp_isolation,
        cp_exclusion,
        preemptive_behaviour,
        explain,
    )


def from_path(
    path: "PathLike[Any]",
    steps: int = 5,
    chunk_size: int = 512,
    threshold: float = 0.20,
    cp_isolation: Optional[List[str]] = None,
    cp_exclusion: Optional[List[str]] = None,
    preemptive_behaviour: bool = True,
    explain: bool = False,
) -> CharsetMatches:
    """
    Same as the function from_bytes, but with one extra step: opening and reading the given file path in binary mode.
    Can raise IOError.
    """
    with open(path, "rb") as fp:
        return from_fp(
            fp,
            steps,
            chunk_size,
            threshold,
            cp_isolation,
            cp_exclusion,
            preemptive_behaviour,
            explain,
        )


def normalize(
    path: "PathLike[Any]",
    steps: int = 5,
    chunk_size: int = 512,
    threshold: float = 0.20,
    cp_isolation: Optional[List[str]] = None,
    cp_exclusion: Optional[List[str]] = None,
    preemptive_behaviour: bool = True,
) -> CharsetMatch:
    """
    Take a (text-based) file path and try to create another file next to it, this time using UTF-8.
    """
    warnings.warn(

|

||||